Key Points

Attack surface management (ASM) is the continuous process of discovering, assessing, and reducing every digital asset an attacker can reach — including systems that are unknown or unmanaged.

Many breaches don't start with a vulnerability. Often, an exposed admin panel or database is all an attacker needs to gain a foothold. Earlier this year, hundreds of FortiGate firewalls were breached without a single zero-day: the attackers simply guessed weak passwords on internet-exposed admin panels.

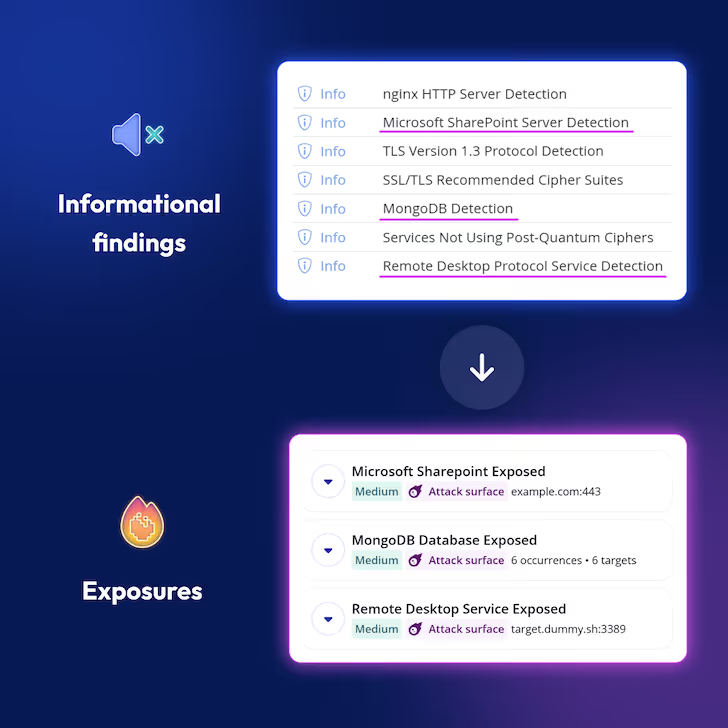

Despite many high-profile breaches of a similar nature, our 2026 Attack Surface Management Index found that 60% of organizations have an exposed HTTP panel, over a quarter have a publicly facing MySQL database, and 11% have Remote Desktop exposed to the internet. Traditional vulnerability management misses all of this because there's no CVE for exposed infrastructure.

This guide explains what ASM is, why it matters, and how to do it properly.

What is an attack surface?

Your attack surface is everything an attacker can reach — every server, subdomain, API, login page, and cloud service your organization has exposed to the internet, whether you know about it or not.

It changes continuously — every time a new service gets deployed, a subdomain is created, or a third-party integration is added, your exposure grows. It includes assets on-premises, in the cloud, in subsidiary networks, and in third-party environments.

Examples of external attack surface

- External-facing systems: web apps, APIs, login portals, subdomains, IP addresses

- Third-party integrations: SaaS tools holding your data, vendor portals, partner APIs

- Shadow IT: systems deployed without IT's knowledge

Where does the attack surface stop?

If you use a SaaS tool like HubSpot, it will hold a lot of your sensitive customer data, but you wouldn't expect to scan it for vulnerabilities yourself. This is where a third-party risk platform comes in: you would expect HubSpot to have strong security safeguards in place, and you would assess the vendor against those safeguards.

The line blurs with external agencies. Say you hire a design agency to build a website, but you don't have a long-term management contract in place. If nobody maintains it, that website may stay live, unpatched, until a vulnerability is discovered and it gets breached.

In these instances, third-party and supplier risk management software and insurance help protect businesses from issues such as data breaches or noncompliance.

What is attack surface management?

Attack surface management is the continuous process of discovering every internet-facing asset your organization has, assessing what's exposed, and reducing that exposure before an attacker finds it.

Exposure can mean two things: current vulnerabilities, such as missing patches or misconfigurations, and exposure to future vulnerabilities. An admin interface like cPanel or a firewall management page may be secure today, but a vulnerability could be discovered tomorrow, at which point it immediately becomes a critical risk. ASM's answer is to get it off the internet before it becomes a problem, rather than waiting for a CVE to force your hand.

Exposure without a vulnerability is still a risk. A firewall admin panel on the internet can be targeted through credential reuse: an attacker who finds login details elsewhere will try them against any exposed interface they can find. Or they'll run a slow, low-volume password-guessing exercise that flies under the radar but eventually yields results.

When a vulnerability does exist, anything exposed is immediately at risk. Take MongoBleed (CVE-2025-14847), which allowed unauthenticated attackers to steal credentials, API keys, and session tokens directly from server memory. At the time of disclosure, more than 87,000 MongoDB instances were publicly exposed despite having no reason to be. Every one of them was at risk the moment the CVE was published.

Reducing your attack surface today makes you harder to attack tomorrow.

How does ASM differ from vulnerability management?

Traditional vulnerability management is reactive — it waits for a vulnerability to be discovered, then tells you to patch it. ASM is proactive — it asks whether something should be exposed in the first place, before a vulnerability exists.

Take a firewall admin panel. Vulnerability management will scan it for known CVEs. ASM will ask why it's on the internet at all, and flag it as a risk regardless of whether a CVE exists. If a vulnerability is discovered tomorrow, every exposed instance is immediately at risk. ASM removes that risk before it materializes.

The two work best together: ASM finds what's exposed and shouldn't be; vulnerability management finds what's wrong with what remains.
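To make the distinction concrete, here is a minimal Python sketch. It assumes a hypothetical asset record with a `service` label, an `internet_facing` flag, and a list of known CVEs; the `SHOULD_NOT_BE_PUBLIC` set, the service names, and the placeholder CVE ID are illustrative assumptions, not any product's actual rules. The vulnerability management check only reports assets with known CVEs, while the ASM check flags a risky exposed service whether or not a CVE exists.

```python
from dataclasses import dataclass, field

# Hypothetical categories of services that usually have no reason to be
# reachable from the internet (illustrative, not exhaustive).
SHOULD_NOT_BE_PUBLIC = {"firewall-admin", "cpanel", "mysql", "mongodb", "rdp"}


@dataclass
class Asset:
    host: str
    service: str  # hypothetical label for what is listening
    internet_facing: bool
    known_cves: list[str] = field(default_factory=list)


def vm_findings(assets: list[Asset]) -> list[str]:
    """Vulnerability-management view: report only assets with known CVEs."""
    return [a.host for a in assets if a.known_cves]


def asm_findings(assets: list[Asset]) -> list[str]:
    """ASM view: report risky exposure, whether or not a CVE exists."""
    return [
        a.host
        for a in assets
        if a.internet_facing and a.service in SHOULD_NOT_BE_PUBLIC
    ]


assets = [
    Asset("fw.example.com", "firewall-admin", True),  # no CVE today
    Asset("www.example.com", "https", True, ["CVE-0000-0000"]),  # placeholder ID
    Asset("db.internal", "mysql", False),  # not internet-facing, so not flagged
]

print(vm_findings(assets))   # ['www.example.com']
print(asm_findings(assets))  # ['fw.example.com']
```

Neither list contains the other: the firewall admin panel only shows up in the ASM view, and the web server with a known CVE only shows up in the vulnerability management view.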

How to secure your external attack surface

ASM is a continuous cycle. Your attack surface changes every time a developer spins up a new service, a subdomain gets created, or a third-party integration is added. The process has to keep pace with that.

- Discover: Find every asset that needs protecting. The goal is to surface not just your known infrastructure, but any unknown subdomains, APIs, login pages, cloud services, and anything else that's reachable from the internet.

- Evaluate: For each discovered asset, assess what's actually at risk. Is an admin panel publicly accessible? Is a database exposed that has no reason to be? Is software running that hasn't been patched? Evaluation isn't just about finding CVEs — it's about understanding the exposure.

- Mitigate: Act on what you find. That might mean patching a vulnerability, closing an unnecessary port, taking an admin interface off the internet, or simply decommissioning a forgotten asset. The aim is to reduce both current exposure and the blast radius of any future vulnerability.
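The Evaluate and Mitigate steps can be sketched as a simple rule table. This minimal Python example assumes discovery has already produced a mapping of hostnames to open ports (the hosts are hypothetical). The port table uses well-known default ports (3306 for MySQL, 5432 for PostgreSQL, 27017 for MongoDB, 3389 for Remote Desktop), and the set of "expected public" ports and the suggested actions are illustrative assumptions rather than a complete policy.

```python
# Well-known default ports for services that rarely need to be public.
# Services can run on non-default ports, so this is only a heuristic.
RISKY_PORTS = {
    3306: "MySQL database",
    5432: "PostgreSQL database",
    27017: "MongoDB database",
    3389: "Remote Desktop (RDP)",
}

# Ports we assume are expected to be public for this example.
EXPECTED_PUBLIC = {80, 443}


def evaluate(open_ports: dict[str, set[int]]) -> list[tuple[str, int, str]]:
    """Return (host, port, suggested mitigation) for each exposure found."""
    findings = []
    for host, ports in sorted(open_ports.items()):
        for port in sorted(ports):
            if port in RISKY_PORTS:
                action = f"remove {RISKY_PORTS[port]} from the internet"
            elif port not in EXPECTED_PUBLIC:
                action = "review: unexpected open port"
            else:
                continue  # expected exposure; leave it to vulnerability scanning
            findings.append((host, port, action))
    return findings


discovered = {
    "shop.example.com": {443},
    "legacy.example.com": {80, 3306, 8443},
}
for host, port, action in evaluate(discovered):
    print(f"{host}:{port} -> {action}")
```

Only the unexpected ports on `legacy.example.com` are reported; the expected web ports are left for vulnerability scanning to assess.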

For more detail, watch our attack surface reduction workshop (or read the summary here).

Why attack surface management is important

Most organizations are managing a larger attack surface than they realize — and the gap between what they think they have and what's actually exposed is where breaches happen. These are some of the reasons why that gap keeps growing.

Why asset management is harder than it looks

Knowing what you have sounds simple. In practice, most organizations have assets they've forgotten, inherited, or never knew existed.

Often considered the poor relation of vulnerability management, asset management has traditionally been a labor-intensive, time-consuming task for IT teams. Even when teams controlled the hardware assets inside their organization and network perimeter, it was still fraught with problems.

If just one asset was missed from the asset inventory, it could evade the entire vulnerability management process and, depending on its sensitivity, have far-reaching implications for the business.
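One way to catch an asset that has slipped out of the inventory is to reconcile what external discovery actually finds against what the inventory says you own. The sketch below assumes both are available as plain sets of hostnames or IP addresses (the example values are hypothetical). Anything discovered but not inventoried is an unknown asset that has been sitting outside the vulnerability management process.

```python
def reconcile(inventory: set[str], discovered: set[str]) -> dict[str, set[str]]:
    """Compare the asset inventory with externally discovered assets."""
    return {
        # Reachable from the internet but missing from the inventory:
        # these are the assets that evade vulnerability management.
        "unknown": discovered - inventory,
        # Listed in the inventory but not seen externally: possibly
        # decommissioned, internal-only, or missed by discovery.
        "not_seen": inventory - discovered,
    }


inventory = {"www.example.com", "api.example.com", "vpn.example.com"}
discovered = {"www.example.com", "api.example.com", "old-campaign.example.com"}

result = reconcile(inventory, discovered)
print(sorted(result["unknown"]))   # ['old-campaign.example.com']
print(sorted(result["not_seen"]))  # ['vpn.example.com']
```

The "unknown" set is the one that matters most here: it lists exactly the assets that would never have been scanned.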

This was the case in the Deloitte breach in 2016, where an overlooked administrator account was exploited, exposing sensitive client data.

When companies expand through mergers and acquisitions, they often take over systems they're not even aware of. Take the example of telco TalkTalk, which was breached in 2015: up to 4 million unencrypted records were stolen from a system it didn't even know existed.

It's a challenge we see regularly with fast-growing companies. See how Brainlabs used Intruder to stay on top of their attack surface as they scaled through acquisitions.

How cloud adoption expanded the attack surface

Cloud platforms like AWS, Azure, and Google Cloud put infrastructure creation directly in the hands of development teams — bypassing the change control processes that security teams relied on to maintain visibility.

While this is great for speed of development, it creates a growing blind spot: new services get spun up, shadow IT proliferates, and security teams are left trying to keep pace with an attack surface that changes faster than they can track it.

Why attack surface management is more urgent than ever

The window between a vulnerability being disclosed and attackers actively exploiting it was months just two years ago. Now it can be a single day. AI models like Anthropic's Mythos can autonomously discover zero-day vulnerabilities. Any software unnecessarily facing the internet is about to get a lot more attention from attackers. The teams that will weather this best aren't the ones scrambling to react — they're the ones who have ASM built into their processes, so their unnecessary exposure is as minimal as possible.


What attack surface management tools do

ASM tools automate the discovery and monitoring of your external attack surface — finding assets you didn't know you had and flagging exposures before attackers find them.

A good example: an Intruder customer once told us we had a bug in our cloud connectors — our integrations that show which cloud systems are internet-exposed. We were showing an IP address that he didn’t think he had. But when we investigated, our connector was working fine — the IP address was in an AWS region he didn’t know was in use.

What to look for in an ASM solution

Building an ASM strategy means going beyond known assets to find your unknowns, adapting to a constantly changing threat landscape, and focusing on the risks that will have the greatest impact on your business. Here are five things to look for in a good attack surface management solution.

- Continuous monitoring, not periodic scans: Look for a solution that detects changes to your attack surface as they happen, like a port opening or a new service going online, and scans automatically. Intruder monitors your attack surface continuously and triggers scans whenever something changes.

- Discovery that goes beyond what you already know: One of the most important parts of ASM is finding unknown assets. Look for asset discovery capability that finds things like cloud accounts, subdomains, related domains, APIs, and login pages. Intruder finds assets the way an attacker would, surfacing things your internal inventory doesn't have.

- Proactive response to emerging threats: When a critical vulnerability drops, you need to know within hours whether you're affected — not after your next scheduled scan. Look for a tool that proactively scans your environment when new threats emerge. Intruder's emerging threat scans run automatically when a new high-impact CVE is published.

- Cloud integration: If your infrastructure lives in AWS, Azure, or Google Cloud, your ASM tool needs to know about it. Look for native cloud integrations that automatically pull in new assets as they're created. Intruder integrates directly with your cloud accounts so new services are discovered and scanned without manual intervention.

- Results your team can actually act on: An ASM solution that surfaces hundreds of undifferentiated findings creates noise, not security. Look for prioritization that reflects real exploitability. Intruder reduces noise and surfaces what needs fixing first based on real risk.
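The continuous-monitoring point above comes down to comparing successive snapshots of the attack surface and scanning whatever changed. This is a minimal sketch, not a description of how any particular product implements it: it assumes each snapshot is a mapping of host to open ports, and `diff_snapshots` and `changed_targets` are hypothetical helpers that classify the differences and pick the hosts to rescan.

```python
Snapshot = dict[str, frozenset[int]]


def diff_snapshots(old: Snapshot, new: Snapshot) -> dict[str, set[str]]:
    """Classify hosts as new, gone, or changed between two snapshots."""
    old_hosts, new_hosts = set(old), set(new)
    return {
        "new_hosts": new_hosts - old_hosts,
        "gone_hosts": old_hosts - new_hosts,
        "changed_hosts": {h for h in old_hosts & new_hosts if old[h] != new[h]},
    }


def changed_targets(old: Snapshot, new: Snapshot) -> list[str]:
    """Hosts worth rescanning: anything new or whose open ports changed."""
    d = diff_snapshots(old, new)
    return sorted(d["new_hosts"] | d["changed_hosts"])


yesterday = {
    "www.example.com": frozenset({443}),
    "mail.example.com": frozenset({25, 443}),
}
today = {
    "www.example.com": frozenset({443, 3389}),  # an RDP port has opened
    "dev.example.com": frozenset({8080}),       # a new service is online
}

print(changed_targets(yesterday, today))
# ['dev.example.com', 'www.example.com']
```

Hosts that disappear are reported by `diff_snapshots` as well; they don't need scanning, but they may be worth checking against the asset inventory.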

Get started with attack surface management

If software has no reason to be on the internet, patching shouldn't be your first line of defense — removing the exposure should be. That's what ASM is for.

Intruder's EASM solution continuously monitors your external attack surface, finds what you didn't know you had, and tells you what actually needs fixing before an attacker finds it. Book a demo to see what's exposed in your environment.