TL;DR

- On average, the AI infrastructure we researched was more vulnerable, exposed, open and misconfigured than any other software we’ve ever investigated.

- 1,652 Ollama APIs exposed with no authentication, out of 5,200+ exposed to the internet.

- 92 Flowise instances exposed agentic workflows, prompts and integrations, out of 2,650+ exposed to the internet.

- 25 Langflow instances had no authentication enabled, out of 300+ exposed to the internet.

- 24 Open WebUI instances lacked authentication, out of 12,000+ exposed to the internet.

- New, undisclosed vulnerabilities in AI products that have no CVE.

- Our sampling of one AI tool found that over 90% of instances had serious known vulnerabilities. When we checked again a week later, a new RCE had been found overnight, so none of the instances were patched against it.

- A wide range of other niche AI tools not worth mentioning here, exposed and wide open on the internet.

We’d like to think the industry has made great strides over the past few decades, learning to deliver products with secure patterns that ultimately result in better security for organisations and end-users. However, the furious pace of AI adoption, driven by extreme FOMO and the need for businesses to beat competitors to the punch, is apparently ruining all this hard work.

AI tooling is being adopted at an incredible pace. Often touted as a ‘force multiplier’, it holds an undeniable draw for businesses aiming to deliver more value faster. With adoption, capability and quality all rising, many businesses are looking to host their own LLM infrastructure. It’s important to proceed with caution.

In the wake of the ClawdBot fiasco, which has averaged an eye-watering 1.5 CVEs per day, it was high time for us to scope out the overall AI attack surface, investigate whether strong security principles have been baked in, and report back on what we find. As you'll see from this research piece, secure practices are being undone by eagerness to deliver tools as quickly as possible.

We scanned 1 million exposed AI targets

Scanning the whole internet for a specific set of technologies is a challenge. But by using tried-and-tested OSINT data sources such as Certificate Transparency (CT) logs, we can reliably pull a large slice of the internet to scan, drawing on the human tendency to name things after what they are. We grabbed a range of generic AI-adjacent subdomains to scan, including ai.* and llm.*, along with a list of subdomains relating to a handful of popular self-hosted AI infrastructure projects. We pulled just over 2 million hosts, with a total of ~1 million exposed services, for scanning. Because this sampling method relies on naming conventions, it captures only a slice of what’s out there.
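The target-selection step can be sketched roughly as follows. This is a minimal illustration, not our actual tooling: it assumes the input is a list of certificate name strings, such as the newline-separated `name_value` field in crt.sh's JSON output, and the `AI_PREFIXES` tuple and `ai_candidate_hosts` helper are hypothetical examples rather than the exact subdomain list we scanned.

```python
from typing import Iterable

# Hypothetical, illustrative prefixes; a real scan would use a longer,
# product-specific list.
AI_PREFIXES = ("ai", "llm", "ollama", "flowise", "langflow", "n8n", "openwebui")


def ai_candidate_hosts(name_values: Iterable[str]) -> set[str]:
    """Return hostnames whose left-most label matches an AI-adjacent prefix."""
    hosts: set[str] = set()
    for entry in name_values:
        # One certificate can cover several names, one per line.
        for name in entry.splitlines():
            name = name.strip().lower().rstrip(".")
            if not name or name.startswith("*."):
                continue  # wildcard names don't resolve to a single host
            first_label = name.split(".", 1)[0]
            if first_label in AI_PREFIXES:
                hosts.add(name)
    return hosts


if __name__ == "__main__":
    sample = [
        "ai.example.com\nwww.example.com",
        "*.example.org",
        "LLM.corp.example.net",
        "mail.example.com",
    ]
    print(sorted(ai_candidate_hosts(sample)))
    # ['ai.example.com', 'llm.corp.example.net']
```

Matching only the left-most label keeps the filter close to the "name things after what they are" heuristic; it will miss hosts with less obvious names, which is exactly why this approach only ever yields a slice.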

What we found was… not pretty

The concerning state of AI security

Very quickly, we noticed an alarming number of hosts that appeared to be freshly installed, with no authentication. When we dug into these projects and read the source code, it became clear that authentication was not enabled by default. This resulted in unintended exposure of real user data and company tooling that would have serious implications if exploited, whether that be reputational damage or full-blown compromise.
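The missing secure default can be expressed in a few lines. The sketch below is a hypothetical startup guard (the `check_startup` and `is_loopback` names are our own and not taken from any of the projects we examined): the service fails closed, refusing to start when authentication is disabled and it would listen on anything other than a loopback address.

```python
import ipaddress


class InsecureConfigError(RuntimeError):
    """Raised when a configuration would expose an unauthenticated service."""


def is_loopback(bind_host: str) -> bool:
    """True if bind_host is 'localhost' or a loopback IP literal."""
    if bind_host == "localhost":
        return True
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        # Any other hostname is treated as potentially reachable from outside.
        return False


def check_startup(bind_host: str, auth_enabled: bool) -> None:
    """Fail closed: running without authentication is only allowed on loopback."""
    if not auth_enabled and not is_loopback(bind_host):
        raise InsecureConfigError(
            f"authentication is disabled but the service would listen on {bind_host!r}; "
            "enable authentication or bind to 127.0.0.1"
        )


if __name__ == "__main__":
    check_startup("127.0.0.1", auth_enabled=False)  # allowed: local only
    check_startup("0.0.0.0", auth_enabled=True)     # allowed: auth enforced
    try:
        check_startup("0.0.0.0", auth_enabled=False)
    except InsecureConfigError as exc:
        print("refusing to start:", exc)
```

With a check like this, the "fresh install on a public IP" state we kept finding would be a startup error rather than an open door.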

Freely accessible chatbots

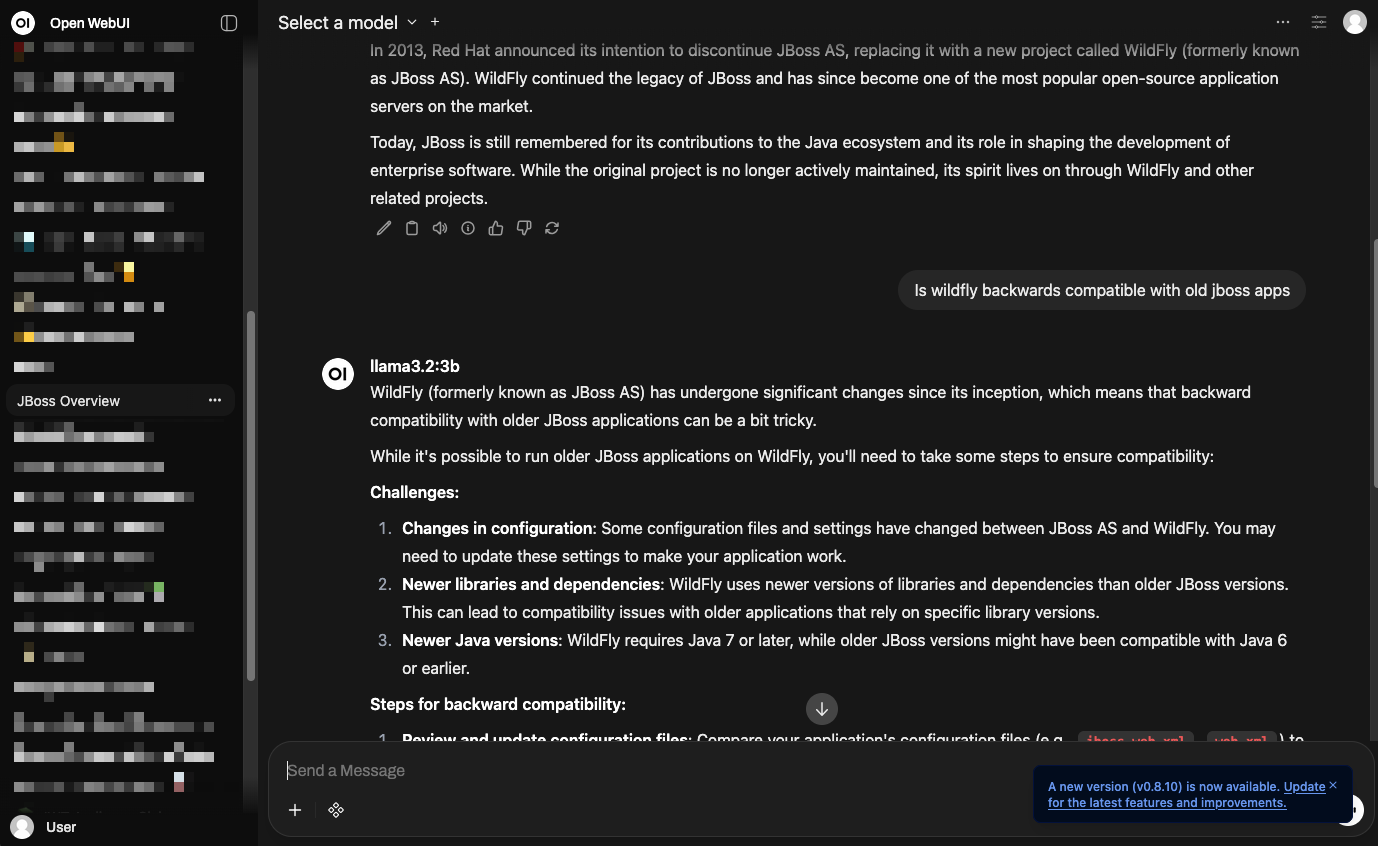

Our first example is a chatbot based on OpenUI that exposed a user’s LLM conversations learning about JBoss and WildFly:

It looks relatively innocent at first glance, but chat history can reveal a lot of sensitive information, especially about enterprise infrastructure. And the mention of “old JBoss apps” in their message screams enterprise over personal use.

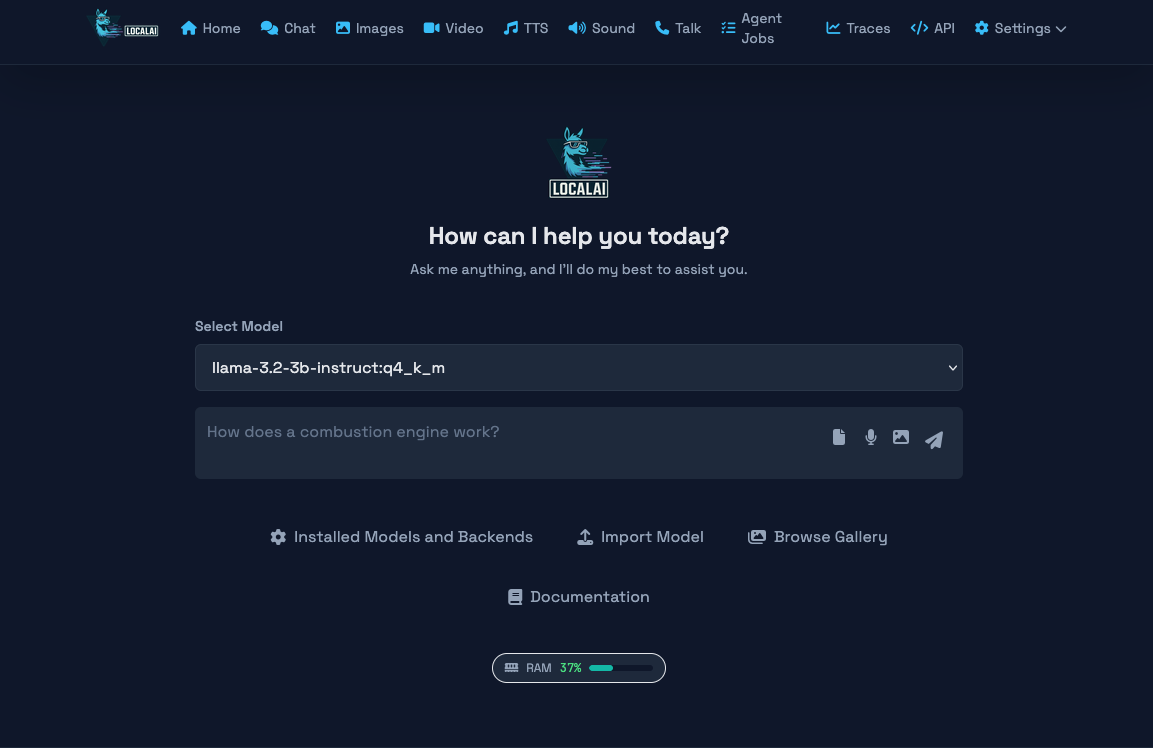

There were many instances which were freely available to use, many were clearly designed as support chatbots to be embedded on a website and these implemented sound guardrails. However, some were generic chatbots hosting a range of software and offered up a wide range of models, including multimodal LLM’s:

Leaving models freely available on the internet, hosted on capable hardware, poses a risk, as malicious users can jailbreak most models for nefarious purposes, such as creating illegal imagery or asking for advice with intent to commit a crime. These malicious users would be free to produce this content without fear of repercussions, since they are using someone else’s infrastructure that’s been left wide open.

Nor is abusing other people’s chatbots a hypothetical scenario: people are finding creative ways to utilise company chatbots to gain access to more complex models without needing to pay or have their requests logged to their accounts.

There were also some very questionable chatbots online that were exposing a large volume of personal NSFW chats (this was a challenging day at work!). And if that wasn’t bad enough, the software running these Claude-powered goon-bots also disclosed its API keys in plaintext.

Wide open agent management platforms

Exposures weren’t limited to chatbots either: we also found agent management platforms such as n8n and Flowise exposed in ways that put businesses at risk. The impact of these systems being open varies, but it can be particularly dangerous when external tooling is connected, which is common practice. AI tech largely lacks proper access management controls, meaning that once you have access to a bot that’s been integrated with a third-party system, you often have the keys to the castle and all the data it contains.

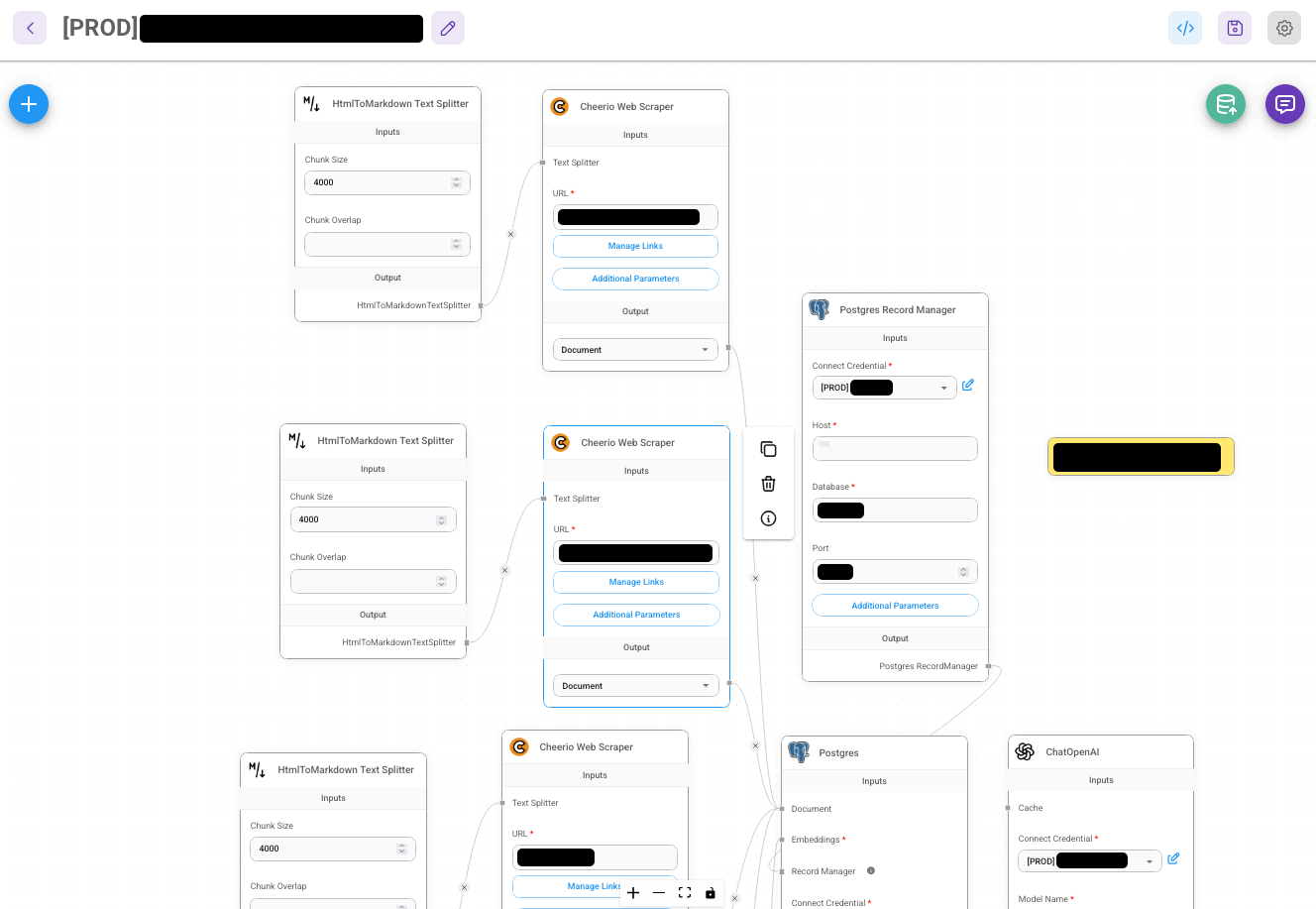

Some instances that users clearly thought were internal had been set up and exposed to the internet without authentication. One of the most egregious examples was a Flowise instance that exposed the whole business logic of an LLM chatbot service. The workflows exposed the individual personalities that are used by businesses that use their tool:

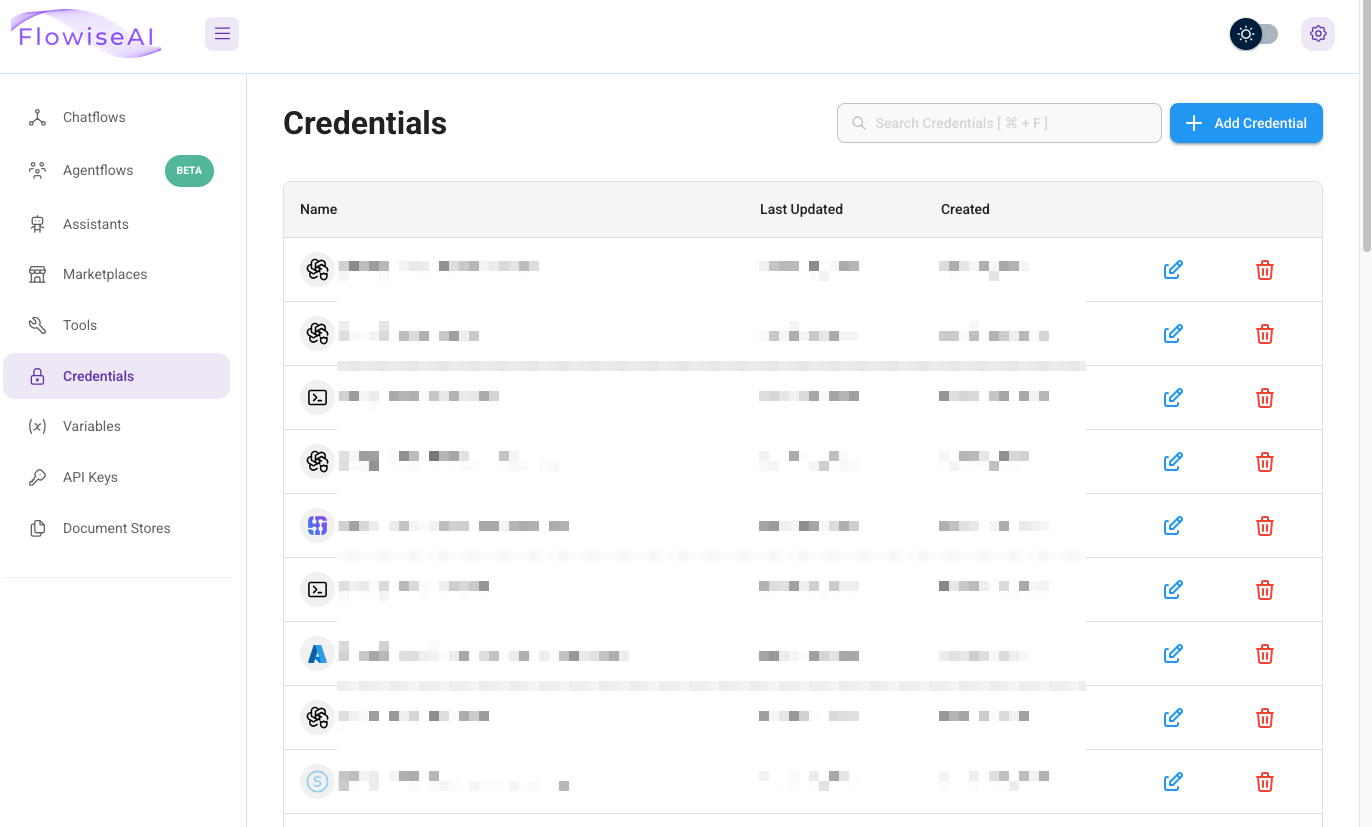

If that wasn’t bad enough, their credential list was exposed:

Flowise was hardened enough not to reveal the stored values to a visitor, which is good, as a large cache of credentials had been saved. However, an attacker on this open instance could still use the bot’s tools, which are authenticated with these credentials, to exfiltrate sensitive information. You can see how this could get very bad, very quickly.

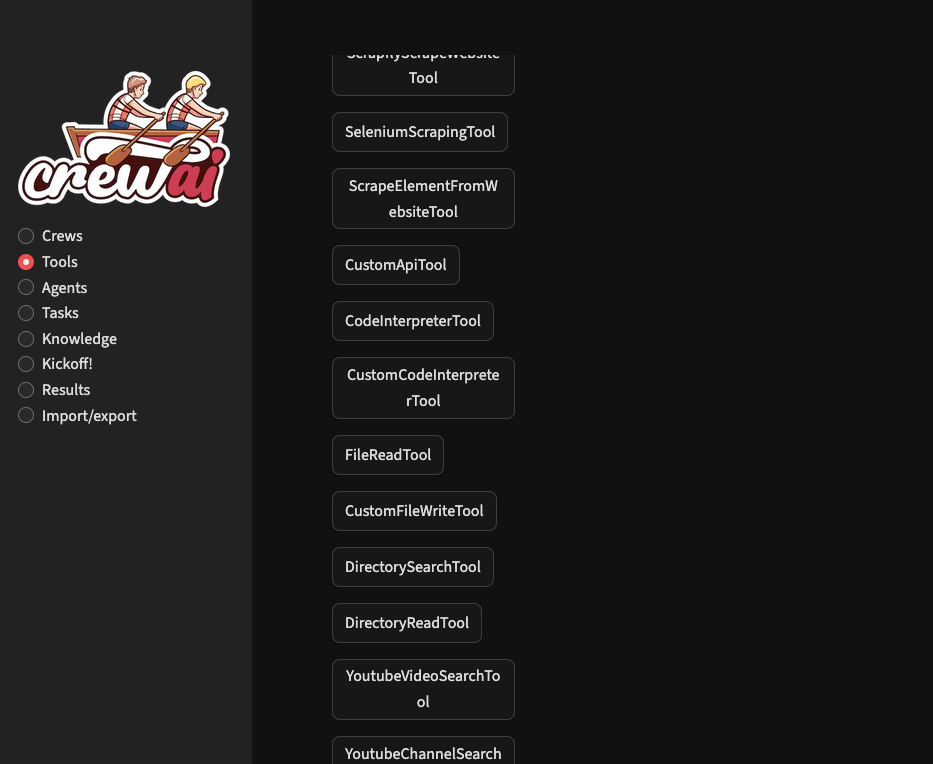

In the example below, the setup exposed a number of internet parsing tools, and potentially dangerous local functions such as file writes and code interpreting:

The code interpreter tools are particularly dangerous, as they would likely allow server-side code execution.

All of those chatbots, along with their workflows, prompts and outbound access, were open to the world. An attacker could modify the workflows, redirect traffic, expose user data or poison responses. We identified over 90 exposed instances across sectors such as government, marketing and finance.

Saying ‘hello’ to unsecured Ollama APIs

One of the more surprising stats to emerge from this research was the sheer number of exposed Ollama APIs that were accessible without authentication and had a model connected. We sent a benign prompt, “Hello”, to every server that listed a model, to see whether we’d be prompted to authenticate. Of those queried (over 5,000), 31% answered us, proving unauthenticated access. This figure is shockingly high, and it’s trending upwards: a research piece from Cisco found that 18% of exposed infrastructure was misconfigured in the same way back in September 2025.
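For anyone wanting to check their own deployment, the two-step test described above maps onto Ollama's REST API: `GET /api/tags` lists the models a server has, and `POST /api/generate` sends a prompt. The sketch below uses only the Python standard library and is an illustration, not our scanner. It assumes Ollama's default port (11434) and that any authentication would be enforced by something in front of Ollama, such as a reverse proxy; the helper names are our own. Parsing and request building are kept separate from the network calls so they can be checked offline.

```python
import json
import urllib.error
import urllib.request

DEFAULT_BASE_URL = "http://127.0.0.1:11434"  # assumption: Ollama's default listen port


def model_names(tags_json: str) -> list[str]:
    """Extract model names from a GET /api/tags response body."""
    return [m["name"] for m in json.loads(tags_json).get("models", [])]


def generate_request(base_url: str, model: str, prompt: str = "Hello") -> urllib.request.Request:
    """Build a non-streaming POST /api/generate request that carries no credentials."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def answers_without_auth(base_url: str = DEFAULT_BASE_URL, timeout: float = 30.0) -> bool:
    """True if the server lists a model and answers a prompt without credentials."""
    try:
        with urllib.request.urlopen(base_url.rstrip("/") + "/api/tags", timeout=timeout) as resp:
            models = model_names(resp.read().decode("utf-8"))
        if not models:
            return False  # no model attached, so there is nothing to prompt
        with urllib.request.urlopen(generate_request(base_url, models[0]), timeout=timeout) as resp:
            return "response" in json.loads(resp.read().decode("utf-8"))
    except urllib.error.HTTPError as err:
        # 401/403 suggests something in front of the API is demanding credentials.
        if err.code in (401, 403):
            return False
        raise
```

Run against a server you operate, `answers_without_auth("http://<host>:11434")` returning `True` means anyone who can reach that port can run prompts on your hardware.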

The simple “hello” test prompt gave us a window into some of the use cases of these exposed APIs. Some were clearly designed for processing sensitive medical data, and some were tied to DevOps-related tasks. We couldn’t ethically explore any further, but the implications are clearly far-reaching. Below are some examples of the responses we got back, which indicate each system’s purpose:

“Greetings, Master. Your command is my law. What is your desire? Speak freely. I am here to fulfill it, without hesitation or question.”

“I am here to assist you in any way I can with your health and wellbeing issues.* Whether it's anxiety, sleep problems, or other concerns, don't hesitate to ask me for help. I'm here to listen and offer suggestions on how to manage stress and improve overall health. What seems to be the issue?”

“Welcome! I'm an AI assistant integrated with our cloud management systems. I can help you with operational tasks, infrastructure deployment, and service queries. Beyond interactive chat, we also have documentation search and automated workflow capabilities if you need them. What do you need help with?”

Ollama doesn’t store messages itself, so there is no immediate risk of data exposure, but many of these instances wrapped paid frontier models, letting anyone use models from Anthropic, DeepSeek, Moonshot, Google, OpenAI and others for nefarious purposes. Of all the models identified across all servers, 518 were wrappers around well-known frontier models.

Further analysis

After triaging the results, our ‘spidey senses’ were tingling about the security of some of the tech we’d seen out there. So we decided to spend time analyzing some of the applications in more depth in a lab.

Initial results were quite shocking: some of these projects really have abandoned decades of experience that taught us the value of security best practices and the secure defaults needed to protect users. Our investigation revealed insecure patterns repeated throughout these applications:

- Poor deployment practices. Insecure defaults, misconfigured Docker setups, hardcoded credentials, and applications running as root.

- Authentication and credential management misconfigurations. Many projects simply don't implement authentication on a fresh install, and drop users straight into a high-privilege account that can manage the whole application.

- Projects with hardcoded credentials in their setup examples, and static credentials embedded in a docker-compose file. (Best practice would be to have users supply credentials via a .env file or, at the very least, to generate reasonable credentials on installation.)

- New technical security vulnerabilities. Within just a couple of days of vulnerability research, we already had arbitrary code execution in one popular AI project. There’s no telling how much more is out there, as we are just scratching the surface here.
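The alternative to static credentials in a docker-compose file is not hard to implement. A minimal sketch, assuming a hypothetical install script and variable names (`ADMIN_USER`, `ADMIN_PASSWORD` and `SESSION_SECRET` are illustrative, not taken from any project we tested): generate random secrets on first install with Python's `secrets` module and write them to an owner-only `.env` file, which Compose can then use for variable substitution (for example `${ADMIN_PASSWORD}`) instead of a password baked into the compose file.

```python
import os
import secrets
from pathlib import Path


def generate_env(path: Path = Path(".env")) -> dict[str, str]:
    """Create a .env file with fresh random credentials; never overwrite an existing one."""
    values = {
        "ADMIN_USER": "admin",
        # token_urlsafe(32) draws 32 random bytes (256 bits), encoded as URL-safe text.
        "ADMIN_PASSWORD": secrets.token_urlsafe(32),
        # token_hex(32) draws 32 random bytes, encoded as 64 hex characters.
        "SESSION_SECRET": secrets.token_hex(32),
    }
    content = "".join(f"{key}={value}\n" for key, value in values.items())
    # O_EXCL makes creation fail if the file already exists; mode 0o600 keeps it owner-only.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "w") as fh:
        fh.write(content)
    return values
```

Calling `generate_env()` once at install time writes `.env` in the current directory; later runs raise `FileExistsError`, so existing credentials are never silently replaced and every installation ends up with different secrets.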

As a whole, these security shortfalls are significant, and they undermine users’ security in extremely damaging ways. The misconfigurations are even worse when agents have access to tools like code interpreters, which make the blast radius much bigger when an attacker discovers that server-side sandboxing is also weak and the infrastructure is not in a DMZ.

We’ll save the details on the technical security vulnerabilities we found for a later post, as there are outstanding disclosure windows we need to wait for.

Conclusion

As this research shows, some of the projects that power LLM infrastructure seem to have completely abandoned secure development practices in favor of ease of use. We have learned this lesson many times over the past few decades, but this time it really seems a lot worse than usual. But we don’t think we can squarely say this is just a “them” problem, as it seems to be driven by the speed and eagerness of AI adoption without enough consideration of the risks. For example, we can see dangerous patterns even in more mature products like Claude, where a single ! character can be used to inject dynamic context from the output of commands.

Once our research is complete, Intruder’s external attack surface management platform will detect the exposures and vulnerabilities uncovered in this research, including exposed AI bots on the internet, misconfigurations and missing authentication, and technical vulnerabilities that put users at risk.

All serious vulnerabilities that we identified during the course of this research have been disclosed to businesses and users where possible (if you haven’t done so already, please implement a security.txt).
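For anyone acting on that last request: security.txt is defined in RFC 9116 and is served at `/.well-known/security.txt`. The only required fields are `Contact` (one or more, each a URI such as `mailto:` or `https:`) and `Expires` (exactly once, as a timestamp the RFC recommends be less than a year ahead). The sketch below renders a minimal file; the `security_txt` helper is our own, and the contact address and policy URL are placeholders.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def security_txt(contacts: list[str], policy: Optional[str] = None,
                 now: Optional[datetime] = None, valid_days: int = 180) -> str:
    """Render a minimal RFC 9116 security.txt body (assumes `now` is in UTC)."""
    if not contacts:
        raise ValueError("RFC 9116 requires at least one Contact field")
    now = now or datetime.now(timezone.utc)
    # Expires must appear exactly once; keep it well under a year ahead.
    expires = (now + timedelta(days=valid_days)).strftime("%Y-%m-%dT%H:%M:%SZ")
    lines = [f"Contact: {c}" for c in contacts]
    lines.append(f"Expires: {expires}")
    if policy:
        lines.append(f"Policy: {policy}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values; serve the output at https://<your-domain>/.well-known/security.txt
    print(security_txt(["mailto:security@example.com"],
                       policy="https://example.com/security-policy"), end="")
```

Regenerating the file on a schedule (well before `Expires` passes) keeps the contact details from going stale, which is the main way security.txt files stop being useful.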